HP Integrity Virtual Machines (HPVM) is a virtualization solution for Itanium-based servers. I started this project in late 2000 and left the team in early 2010, making it the project on which I spent the most time in my professional life. It was also the most complex one, being an enterprise-grade virtualization solution for Itanium processors (not the simplest CPU architecture to virtualize).

For this project, I was fortunate to get the early help of two HP system software gurus, Todd Kjos and Jonathan Ross. The three of us got the software to the point of booting 4-way HP-UX guests. Then we got a much larger team, mostly composed of former Tru64 and OpenVMS wizards, who helped us turn this prototype into a real product. The team was made up of remarkably bright people, some of whom had been working on operating systems such as TOPS-10, TOPS-20 or OpenVMS long before I even knew what a calculator was.

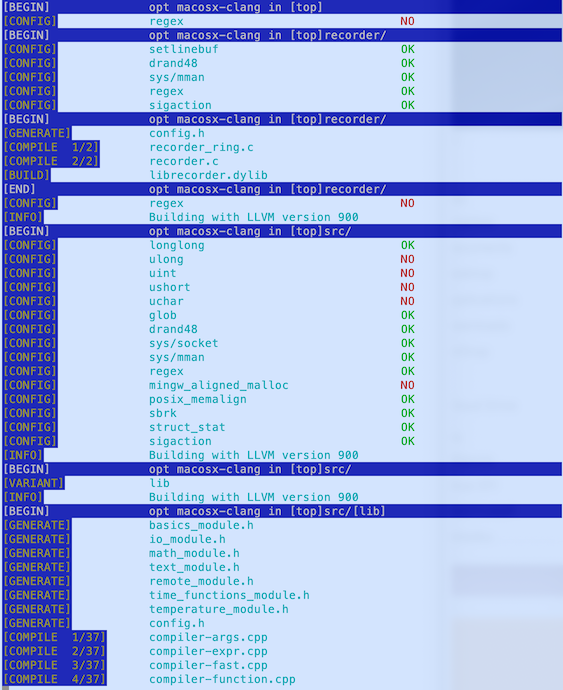

In addition to the early design of HPVM, I contributed to many facets of the product: low-level interrupt handlers; binary translation; memory management; interrupt handling; interrupt emulation; context switching; networking and disk I/O; debugging tools; scheduling; scalability; testing; user-space management tools; and I am probably forgetting a few. It was a lot of fun, with many interesting problems and interaction with a number of super-smart people, like Karen Noel, who, besides doing most of the work on on-line guest migration, also tried (and failed) to improve my OpenVMS and English skills.

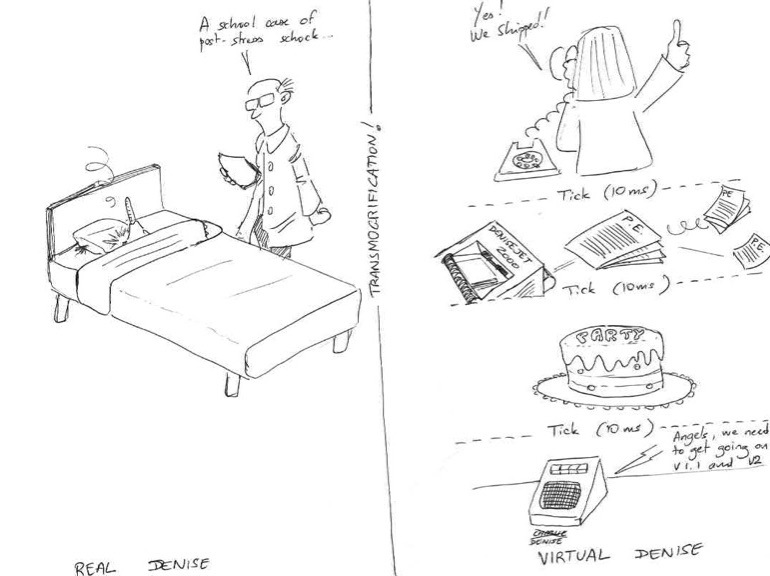

HPVM uses HP-UX as its "management console", and also inherits its remarkable I/O capabilities. I called the context switch between the HPVM monitor and HP-UX "transmogrification" (abbreviated Xmog), a reference to Calvin and Hobbes and a hat tip from a French comics lover to Bill Watterson.

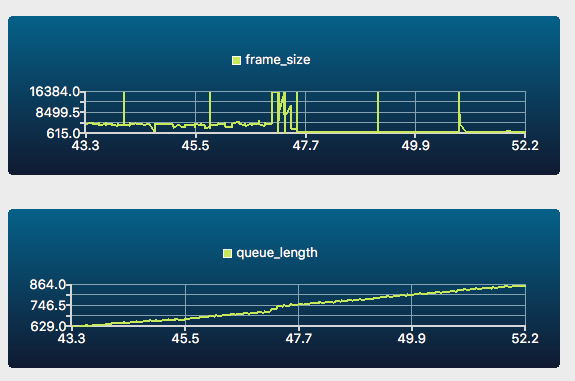

HPVM offered very good scalability, thanks in large part to scheduling work done by Scott Rhine. It also featured high-level management tools, para-virtualized I/O, on-line guest migration, on-line addition and removal of devices, RAM and even CPUs, cluster integration, and many more things that are just starting to show up in open-source virtualization tools.

I feel really bad for not naming all the individuals who contributed to HPVM, but they know who they are, and they know I remember them all fondly.

HPVM on Wikipedia